27 February 2026

Benchmarking automated mechanism design

To help us shape the development of our Scaling Trust programme, we funded a series of short, exploratory research projects, ranging from exploring aspects of Arena design to diving into topics around physical trust and AI security theory.

As part of this, Samuele Marro and his team studied whether agents can automatically design, negotiate and follow game-theoretic interaction protocols. We recently caught up with Samuele to find out more about the project.

Tell us about your ARIA project.

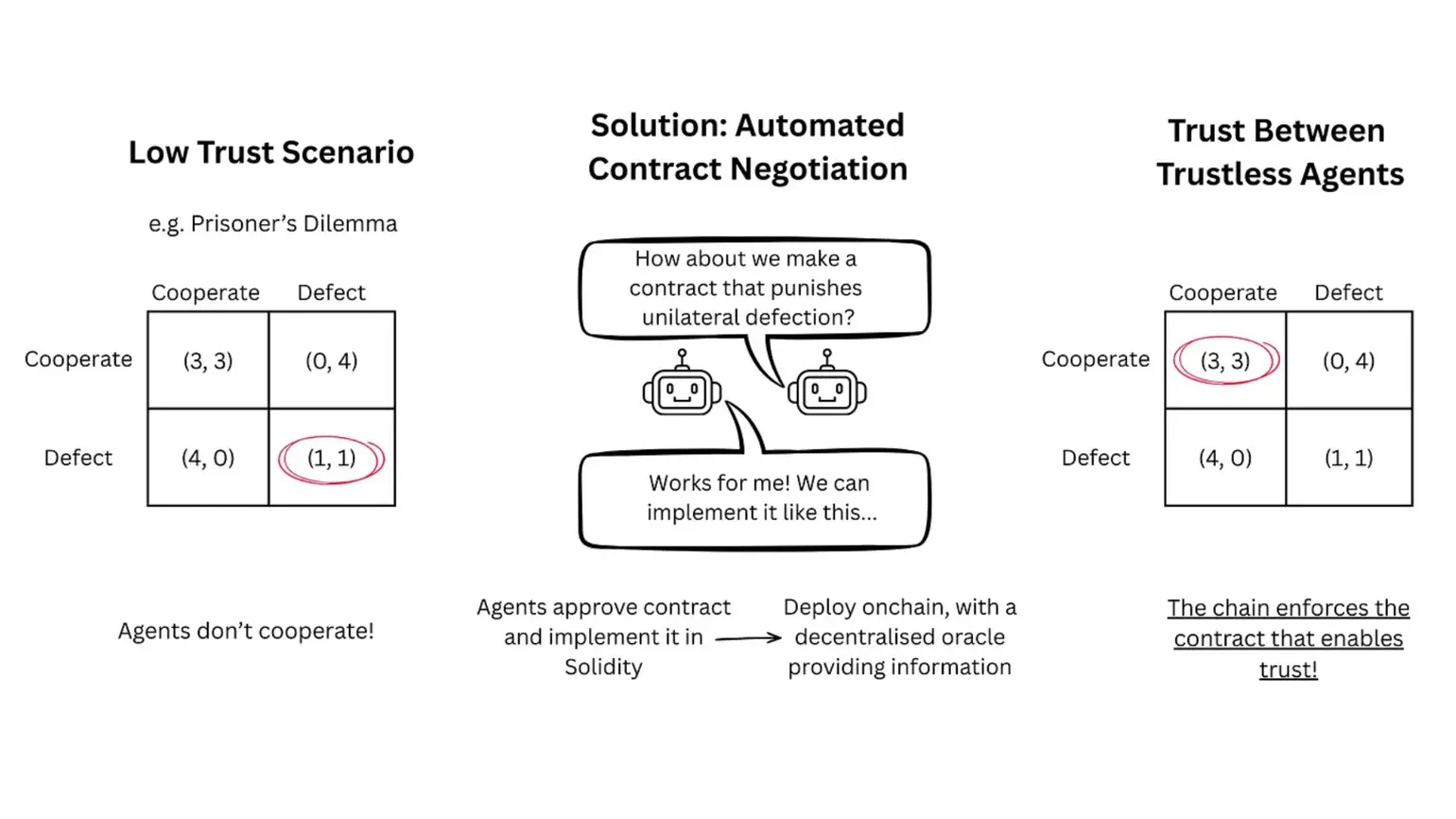

My colleagues (Angelo Huang, Emanuele La Malfa) and I work on negotiation and contract enforcement between LLM agents that don’t trust each other. When humans – or companies – don’t trust each other, they sign contracts. By binding themselves to certain rules, they can then collaborate and build trust without worrying about being betrayed. For humans this negotiation process might take months or years, but agents can do it in seconds.

What we showed in our ARIA-funded project is that not only can agents come up with contracts that are useful, but they can also enforce agreements by implementing them as smart contracts. By writing agreements in a programming language and deploying them on blockchains, they can set up enforcement systems that cannot be undermined. This increases trust between agents, makes negotiation much cheaper, and can ultimately lead to more effective agent ecosystems.

Using automated contract negotiations to unlock trust in LLMs.

What inspired you to work on this specific challenge?

My ‘aha’ moment came two years ago, when I was testing ways to improve communication efficiency between agents (back then, it was a far more niche topic). I realised that the simplest way to increase efficiency was to let the agents figure out the best approach on their own. From that first result, I started working more on autonomous interactions: if agents could figure out efficient communication, could they figure out precise communication? What about secure communication?

I worked my way up the ladder of questions and realised that all of them were part of a broader goal: enabling coordination between agents. I saw that this research area had a lot of potential for improving both agent systems and human organisations, and it became one of my main ongoing areas of work.

What do you wish more people knew about your research area?

The number one thing is that agentic negotiation is now surprisingly feasible. In the span of a few years, we went from LLMs barely keeping track of a conversation to agents that can negotiate, implement, and enforce agreements in an end-to-end fashion. The effects of this shift aren’t fully understood yet, but it will likely unlock use cases that negotiation costs previously ruled out, such as micro-markets, agent-mediated organisations, and automated supply chains.

What do you believe will be the key next steps/applications from your work – how will it help scale trust?

We showed that two agents can negotiate contracts with each other. But why stop there? Why can’t we have 10, 100, 1000 agents negotiate agreements? And why limit ourselves to contracts, when we could design entire collaboration protocols? Our goal is to create an end-to-end system that takes a collection of arbitrary agents and turns it into an effective, trustworthy organisation.

And finally, what book/film/TV show should people check out to understand your project or discipline more?

The Evolution of Cooperation for non-fiction and The Expanse, which is sci-fi, for fiction – the former talks about how cooperation can emerge without a central authority, while the latter is set in a world where three factions have to collaborate without trusting each other (there’s also a TV series!).